SoundSense

Biomimetic AR that maps the auditory world into spatial vision for the hearing-impaired community.

Best Use of Edge AI, MIT Reality Hack

Overview

A biomimetic assistive technology using Arduino and Meta Quest 3 that translates auditory information into spatial visual cues, enabling Deaf and hearing-impaired individuals to perceive sound with spatial awareness.

Problem

Deaf and hearing-impaired individuals don't just miss sound; they miss the spatial context it carries. Knowing a car is approaching matters less than knowing which direction it's coming from.

This meant we weren't building another transcription tool. We were building a spatial awareness layer.

Our solution

We built a wearable AR system that captures sound in real time, identifies its source, and renders spatial visual cues directly in the user's field of view.

Spatial Awareness in AR

We came together with a question:

How has AR been used, and what rarely explored opportunities does it offer for accessibility?

An Overlooked Space

AR/VR innovation clusters around edtech, entertainment, and gaming. Accessibility remains relatively unexplored. AR's strength is augmenting real-time perception of physical environments. For individuals with hearing impairment, that could mean spatial awareness: understanding where sound comes from, not just what it says.

Invisible Challenge

Most assistive tech translates sound into text or volume. We wanted to focus on the missing piece during the three-day hackathon. Ambient cues, like a car approaching or someone calling your name from behind, form an invisible communication layer that hearing individuals navigate unconsciously. For those with hearing impairment, the loss isn't just sound but spatial awareness.

Problem statement

How can we prioritize awareness over verbosity to give users spatial sound awareness?

Biomimicry From Barn Owls

Barn owls navigate darkness by mapping sound spatially, not by volume. We applied the same approach: visualize where sound comes from, not just what it says. This shift moved us from speech-to-text captions toward spatial awareness, helping users understand their acoustic environment, not just transcribe it.

Day One: Scoping Under Pressure

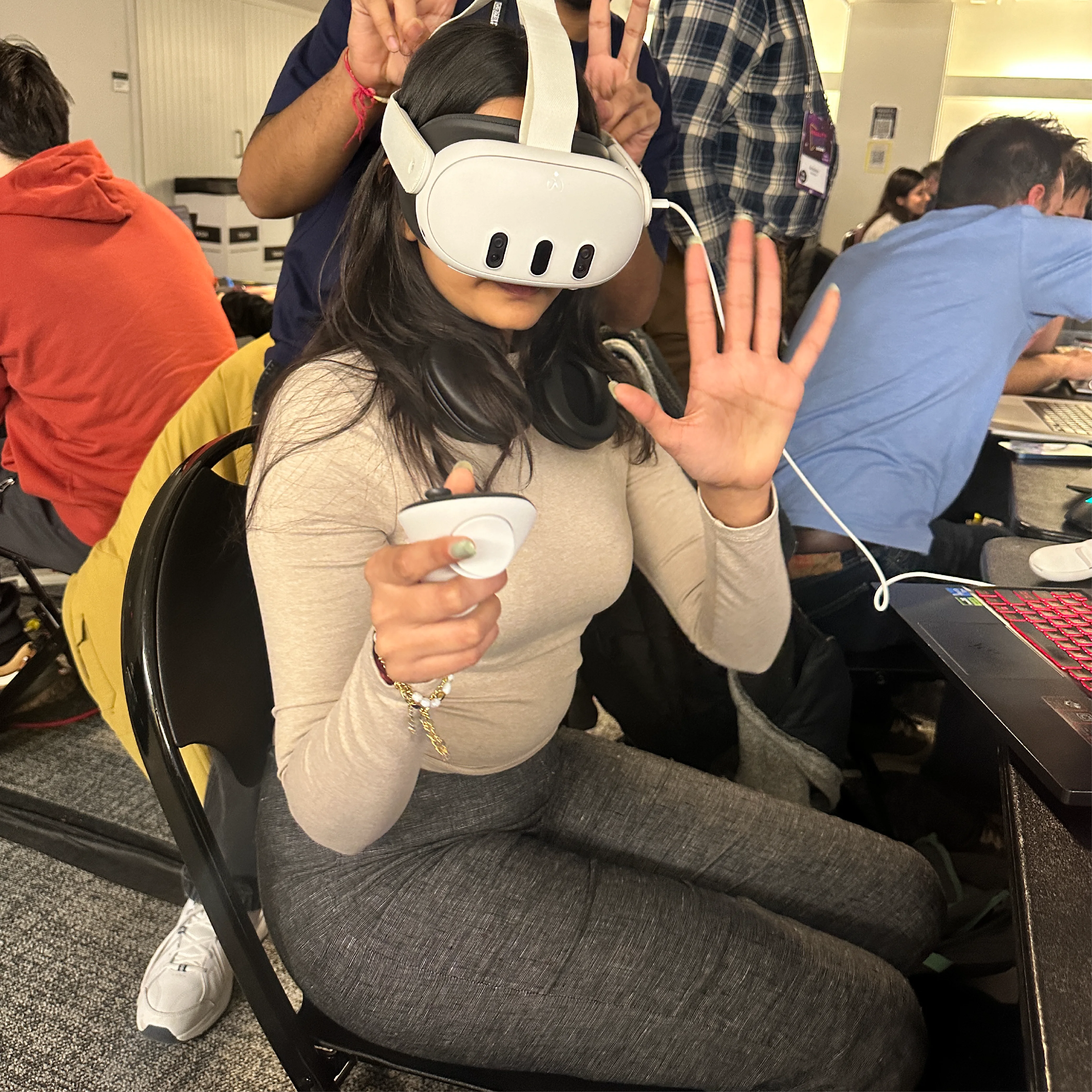

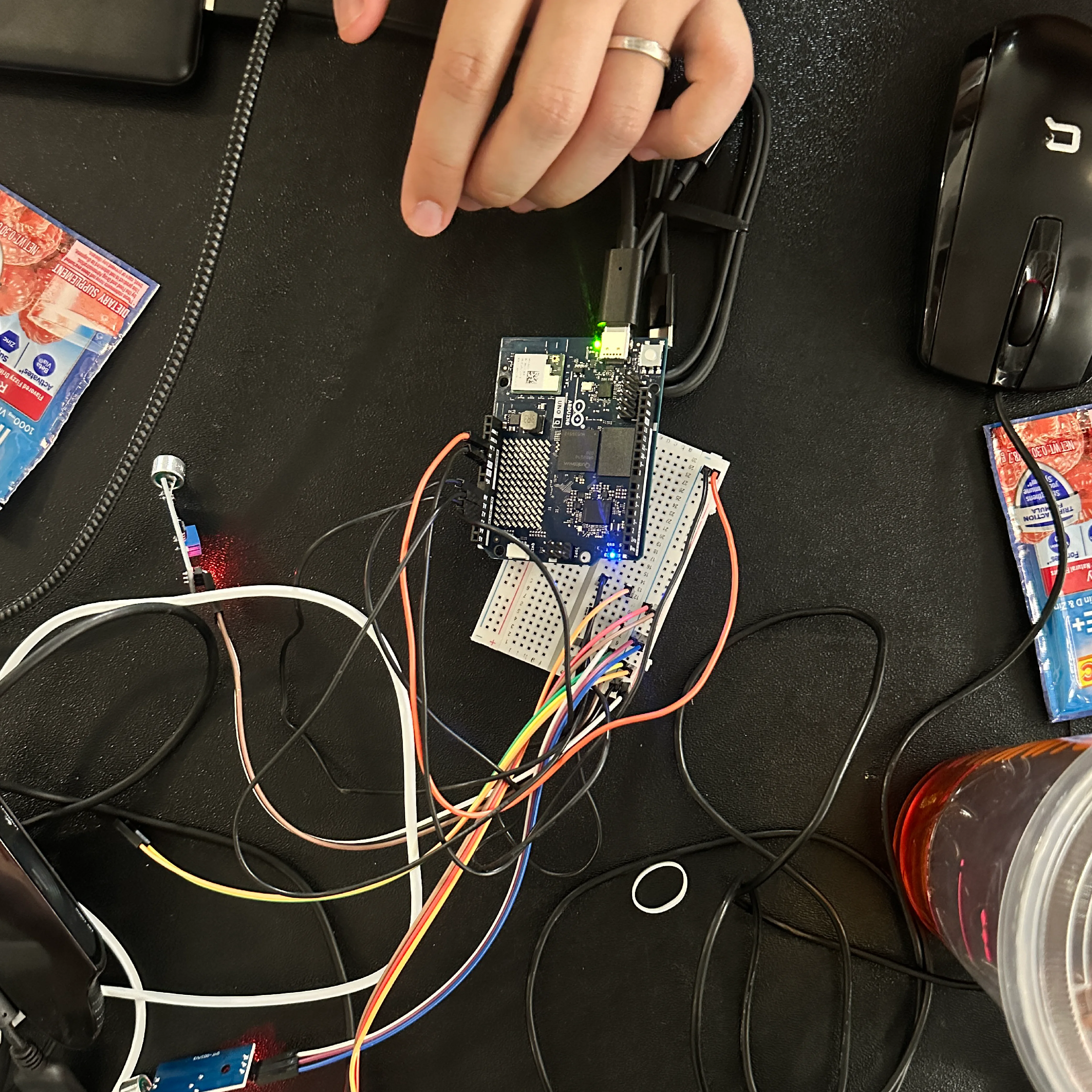

We spent the first day scoping the project. We decided to use a wearable sound sensor array and an Arduino Uno Q for processing. We also decided to use a Meta Quest 3 for AR visualization.

System Architecture

The technical architecture came together quickly: wearable sound sensors positioned around the neck, an Arduino Uno Q for processing, machine learning for audio classification, and Meta Quest 3 for AR visualization. Each component had to work independently, but more importantly, they had to work together.
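One way to let the components work independently yet together is to agree on a small message that passes between them. The sketch below is illustrative only: the write-up does not say how data moved between the Arduino, the classifier, and the headset, so the `SoundEvent` fields and the JSON encoding are assumptions made for this example.

```python
import json
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class SoundEvent:
    """Hypothetical message shared by sensing, classification, and AR."""
    bearing_deg: float                # direction of the sound relative to the wearer
    label: str                        # classifier label, e.g. "speech"
    confidence: float                 # classifier score in [0, 1]
    transcript: Optional[str] = None  # set only when speech was transcribed


def encode(event):
    """Serialize an event to a JSON string for transport."""
    return json.dumps(asdict(event))


def decode(payload):
    """Rebuild an event from a JSON string produced by encode()."""
    return SoundEvent(**json.loads(payload))
```

With a shared shape like this, each stage can be tested on its own by feeding it hand-written events before the hardware is ready.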

Day one focused on feasibility. We built a basic sensor array that could detect sound and transmit to the Arduino.

The Core Question

The system answers three questions: When is someone communicating? Where are they? What needs attention versus background noise? We wanted to move away from speech transcription alone and toward situational communication.

Day Two: Making It Work

MIT Hackathon

We positioned wearable sound sensors around the neck, close to where attention naturally sits. Individuals with hearing impairment already possess refined spatial intuition. We wanted to leverage existing senses rather than replace them.

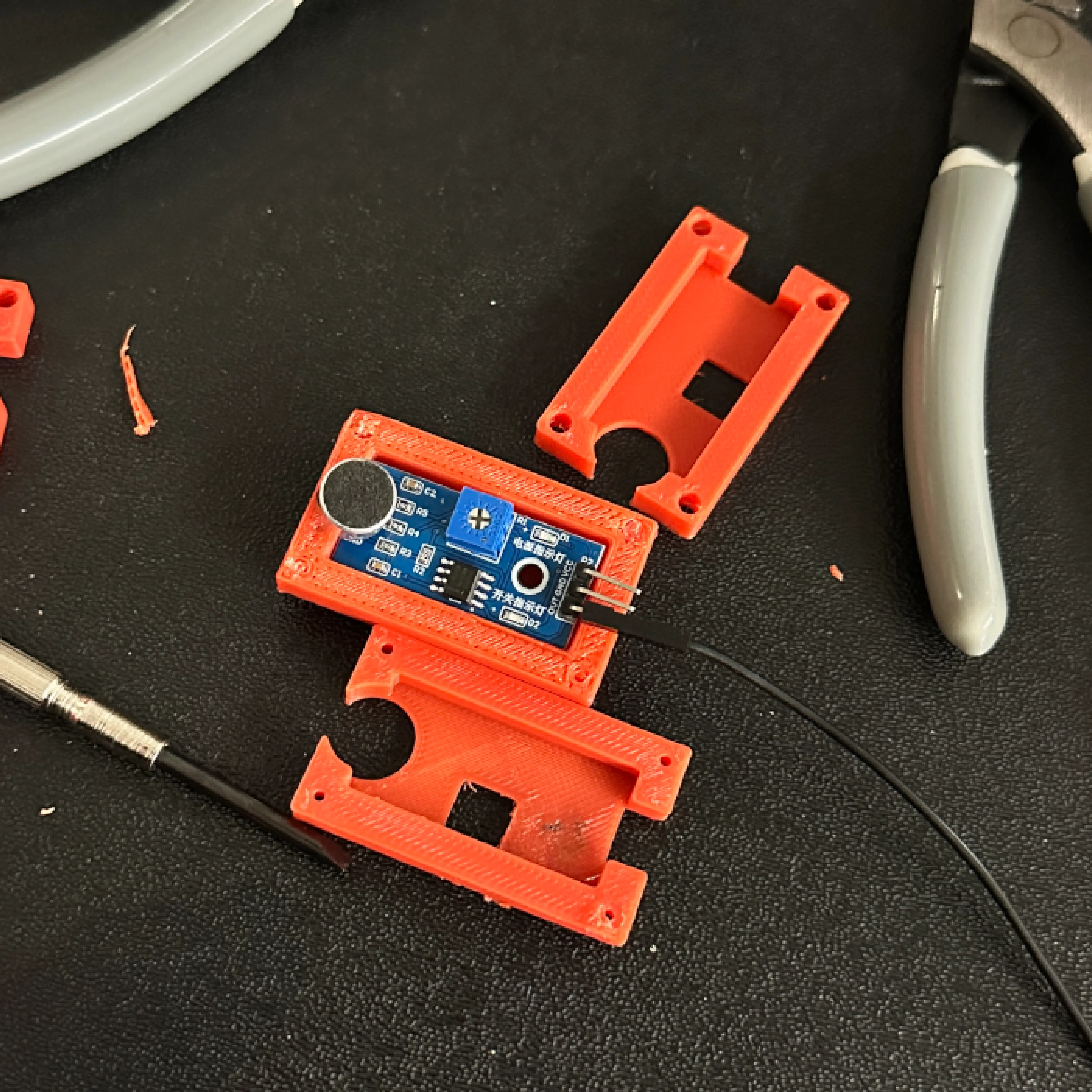

These sensors form an acoustic array connected to an Arduino Uno Q. In its most minimal (MVP) form, the system prioritizes the loudest and closest sounds, using physical proximity as the filtering mechanism.
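The prioritization described above can be sketched roughly as follows. This is a minimal illustration, not the project's firmware: the four-sensor layout, the `noise_floor` threshold, and the function name `estimate_direction` are all assumptions made for the example. Each sensor's loudness is treated as a vector pointing along that sensor's bearing, and the vectors are summed, so louder (and typically closer) sources dominate the estimate.

```python
import math

# Hypothetical layout: four sensors around the neck, bearings in degrees
# (0 = front, 90 = right, 180 = back, 270 = left). Not from the original build.
SENSOR_ANGLES_DEG = [0.0, 90.0, 180.0, 270.0]


def estimate_direction(levels, noise_floor=0.1):
    """Return (bearing_deg, strength) for the dominant sound, or None.

    levels: one non-negative loudness reading per sensor, in the same
    order as SENSOR_ANGLES_DEG. Readings at or below noise_floor are ignored.
    """
    if len(levels) != len(SENSOR_ANGLES_DEG):
        raise ValueError("expected one level per sensor")

    x = y = 0.0
    for level, angle_deg in zip(levels, SENSOR_ANGLES_DEG):
        if level <= noise_floor:
            continue  # proximity filter: drop quiet, likely distant sounds
        angle = math.radians(angle_deg)
        x += level * math.cos(angle)
        y += level * math.sin(angle)

    strength = math.hypot(x, y)
    if strength < 1e-9:
        return None  # nothing above the noise floor, or sources cancel out
    bearing = math.degrees(math.atan2(y, x)) % 360.0
    return bearing, strength
```

For example, equal readings on the front and right sensors yield a bearing of about 45 degrees, while readings that all sit below the noise floor yield `None`.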

Machine Learning Audio Classification

Filtered audio data is processed using Google's Audio Classification model. We wanted the system to capture a wide range of sounds, creating an audioscape rather than just transcribing speech. Once spoken language is detected, it's transcribed using speech-to-text. This ensures the system supports interaction rather than overwhelming the user with constant feedback.
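The gating step, where speech is transcribed and other sounds become visual cues, might look something like the sketch below. It does not call any real classifier: the `Classification` type, the label set, and the `MIN_CONFIDENCE` threshold are hypothetical stand-ins for whatever the model actually returns.

```python
from dataclasses import dataclass

# Hypothetical labels and threshold, chosen only for illustration.
SPEECH_LABELS = {"speech", "conversation", "shout"}
MIN_CONFIDENCE = 0.6


@dataclass
class Classification:
    label: str         # top label returned by the audio classifier
    confidence: float  # score in [0, 1]


def route(result):
    """Decide what to do with one classified sound.

    Returns "transcribe" for confident speech, "cue" for any other
    confident sound, and "ignore" for low-confidence results.
    """
    if result.confidence < MIN_CONFIDENCE:
        return "ignore"  # avoid flooding the user with uncertain feedback
    if result.label.lower() in SPEECH_LABELS:
        return "transcribe"
    return "cue"
```

Keeping this decision in one small function makes it easy to tune how much feedback reaches the user without touching the sensing or rendering code.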

Day Three: When Things Broke

Building the project in three days required rapid cross-disciplinary collaboration and improvisation. The 3D printer failed repeatedly. The night before presenting, we were still trying to get a working enclosure for the sensor array.

Rather than perfecting individual components, we focused on making them work together: sensing, interpretation, and visualization as one coherent system.

Presentation Day

We presented the project at MIT's Reality Hack, winning Best Use of Edge AI.

Project Impact

Expand the definition of environmental awareness beyond auditory perception, making space legible through multiple sensory channels.

Shift how assistive technology is designed, from compensating for absence to augmenting spatial understanding for all.

Establish a new interface layer between humans and their environment, where sound becomes spatial data.

What I learned

Accessibility and human-centric design

SoundSense reinforced the value of designing for natural intuition and empowerment rather than translation alone: a shared sensory layer that builds on what users already have.

Building under pressure

Coordinating hardware, ML, and AR in three days taught me to trust the process and integrate as we went instead of perfecting each part first.

Awareness over verbosity

Prioritizing spatial awareness over exhaustive transcription gave us a clearer product direction and a more focused system.

Cross-disciplinary collaboration

Sensors, classification models, 3D printing, and AR had to work together; we learned that coherence across the system mattered more than any single component.